Research Zeilinger

When safety is critical, control systems are traditionally designed to act in isolated, clearly specified environments, or to be conservative against the unknown. The goal of our research is to make high-performance control available for safety-critical systems that act in varying, uncertain environments, that are potentially large-scale and composed of numerous interconnected subsystems, and that, most importantly, involve human interaction.

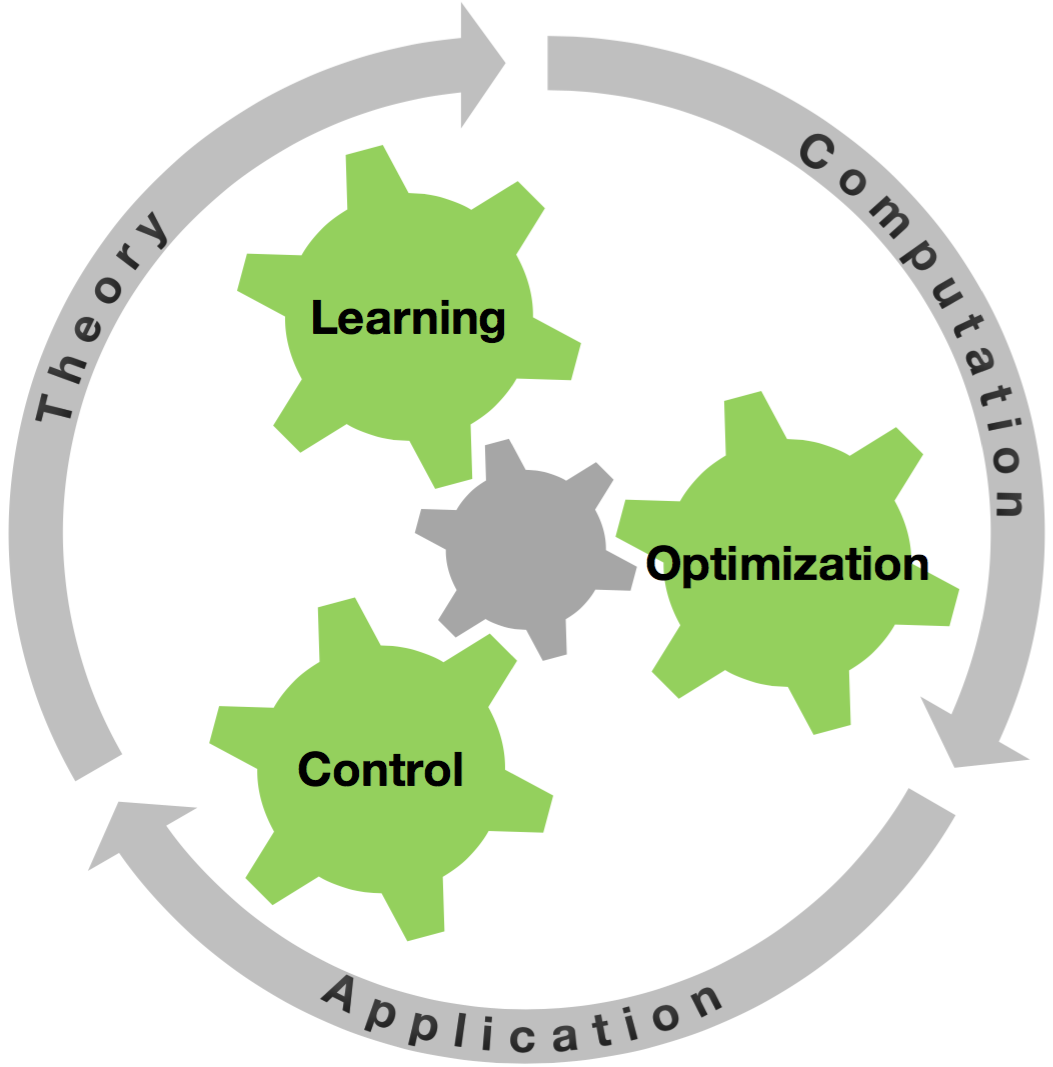

Modern computing, sensor and communication technologies offer a new opportunity to address these challenges, providing increasing computational power and data at previously unseen detail and scale. We develop intelligent control systems that can exploit data while offering system-theoretic guarantees. Our research integrates control, learning and optimization to provide new theoretical frameworks and highly efficient computational tools in three core areas.